Hello Cloud Marathoners!

For a Cloud Marathoner, the journey never stops!

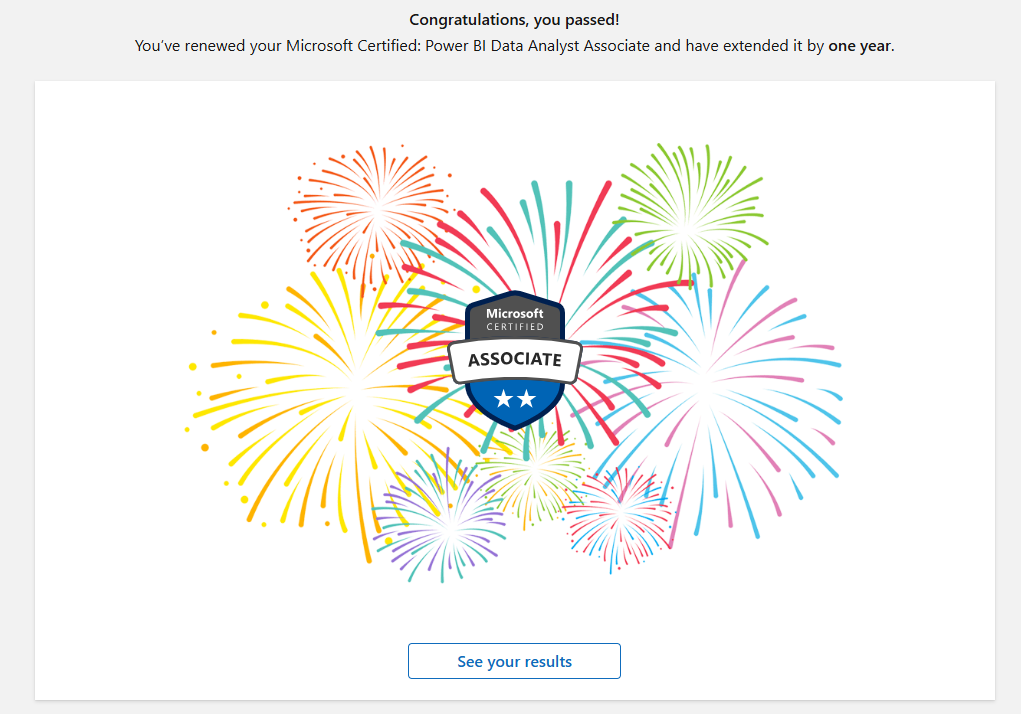

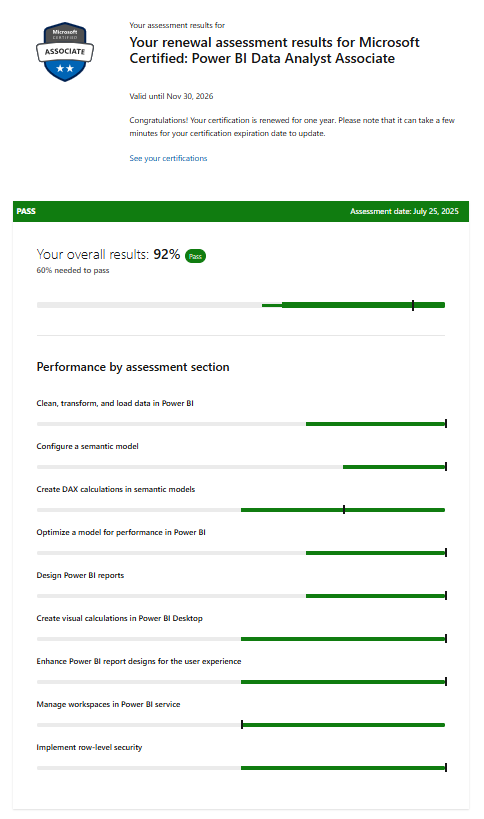

Recently, I renewed my Microsoft Certified: Power BI Data Analyst Associate certification, and I want to share the experience so you can prepare for yours.

Why Renewal Is Important

Microsoft role-based certifications are valid for one year, and renewal keeps your skills aligned with the latest Power BI updates. The renewal assessment is free, online, and unproctored, which suits professionals who want flexibility without scheduling hassles.

The Renewal Process and Eligibility

Here’s what I learned from the official Microsoft Learn site:

- Eligibility: You can renew at any point in the six months before your certification expires.

- Assessment: A short, open-book online assessment focused on recent Power BI updates.

- Skills Measured:

  - Clean, transform, and load data in Power BI

  - Configure semantic models

  - Use DAX time intelligence and modify filter context

  - Optimize models for performance

  - Create visual calculations and enhance report designs

  - Manage workspaces and secure data access in Power BI Service

- Attempts: Unlimited until expiration. A second attempt can follow right away; after that, there is a 24-hour wait between retakes.

- Validity: Passing extends your certification by one year.
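If you like to see the dates spelled out, here is a minimal Python sketch of the window arithmetic described above. It is only an illustration: the function names (`add_months`, `renewal_window`, `can_renew`, `extended_expiration`) are my own and not part of any Microsoft API. It also assumes that the expiration day itself still counts as eligible and that the extra year is counted from the current expiration date. Check your Microsoft Learn profile for the authoritative dates.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping the day to the end of the target month."""
    month_index = d.year * 12 + (d.month - 1) + months
    year, month_zero_based = divmod(month_index, 12)
    month = month_zero_based + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def renewal_window(expiration: date) -> tuple[date, date]:
    """Return (opens, closes): the window opens six months before expiration."""
    return add_months(expiration, -6), expiration


def can_renew(today: date, expiration: date) -> bool:
    """True if today falls inside the window (assumption: expiration day is included)."""
    opens, closes = renewal_window(expiration)
    return opens <= today <= closes


def extended_expiration(expiration: date) -> date:
    """New expiration after passing (assumption: one year from the current expiration)."""
    return add_months(expiration, 12)


if __name__ == "__main__":
    expires = date(2025, 8, 31)  # hypothetical expiration date
    opens, closes = renewal_window(expires)
    print("Window opens:", opens)
    print("Window closes:", closes)
    print("New expiration after passing:", extended_expiration(expires))
```

Swap in your own expiration date to see when your window opens and how much time you have left.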

Preparation Tips

Microsoft provides a curated learning collection (about 11 hours) to help you prepare. I recommend:

- Reviewing DAX fundamentals and performance optimization.

- Practicing workspace management and security settings.

- Exploring visual design enhancements for better user experience.

My Experience and Exam Results

The renewal was straightforward and followed a few simple, familiar steps:

- Logged in to Microsoft Learn and clicked Renew.

- Took the assessment.

- Passed on the first attempt! 🎉

The best part? No stress, just focused learning and validation of current skills.

Key Takeaways

- Start early—don’t wait until the last week.

- Use Microsoft’s official learning paths for prep.

- Treat renewal as an opportunity to refresh your knowledge and stay ahead.

Are you planning to renew your Power BI Data Analyst Certification soon?

Drop your thoughts in the comments or share your experience with the Cloud Marathoner community!